How to Build a Scientific Literature-Review Agent Without Getting Rate-Limited

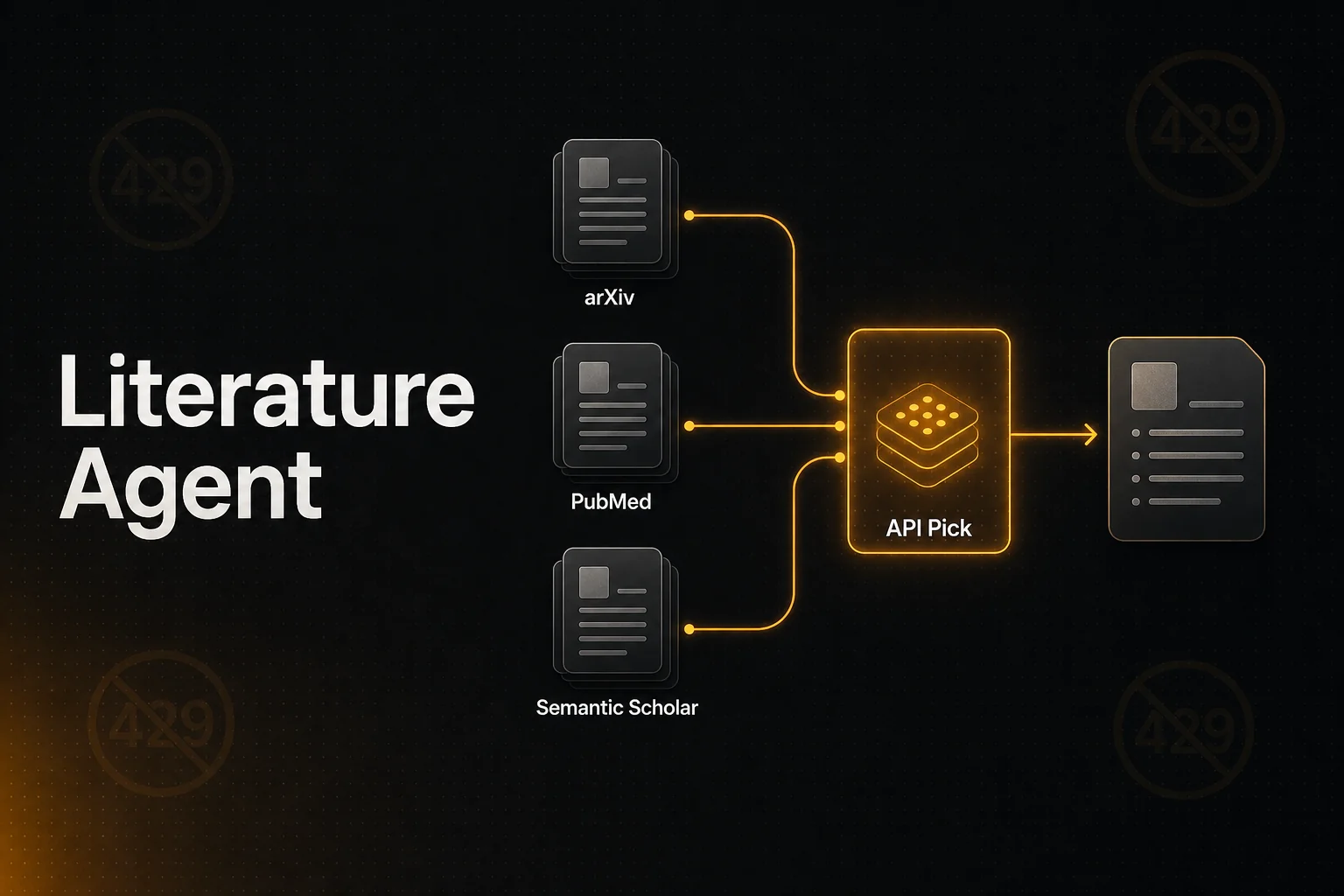

Build a literature-review agent on raw arXiv + PubMed + Semantic Scholar today and you'll hit 429s before you finish ten papers. Here's why the rate limits got worse, what PaperQA / Undermind actually do under the hood, and a working pattern that survives a real review session.

TL;DR

- arXiv began throttling aggressively in late 2024 after LLM scrapers triggered 429 storms; the documented limit is 1 request every 3 seconds, but real-world burst tolerance is lower.

- Semantic Scholar's 1,000 req/sec pool is shared across all unauthenticated callers, which makes it effectively unusable for production agents.

- PubMed E-utils caps unauthenticated traffic at 3 req/sec; with an API key, 10 req/sec.

- Working agents (PaperQA2, Undermind, Elicit) all use a proxy / cache layer in front of these sources.

- API Pick Academic Search wraps arXiv, PubMed, bioRxiv, and medRxiv in one rate-managed endpoint at 5 credits per call, charged only on success.

The problem in one paragraph

Free academic APIs are wonderful and they're being eaten alive. arXiv, PubMed, and Semantic Scholar were designed in an era when 'one researcher writes a script that polls every few seconds' was the worst-case load. Now every undergraduate with Python writes an LLM agent that fans out fifty parallel calls to read one paper's references. Multiply by thousands of similar agents and you get the pattern arXiv staff describe on X as the "arXiv-pocalypse" of late 2024 — sustained Rate Exceeded responses, degraded service, and a wave of new throttling rules that hit indie builders harder than the bad actors.

The result: a literature-review agent that works on five papers in a dev environment falls over halfway through a real review session. The user gets a half-finished answer with three citations instead of fifteen.

This article is about how to build the agent so that doesn't happen.

What's actually rate-limited and where

| | arXiv | PubMed E-utils | Semantic Scholar | OpenAlex | API Pick Academic |
|---|---|---|---|---|---|
| Coverage | Physics, math, CS, bio preprints | Biomedical (35M+ records) | Cross-disciplinary (>200M) | Cross-disciplinary (250M+) | arXiv + PubMed + bioRxiv + medRxiv |
| Rate limit (unauth) | 1 req / 3 sec | 3 req / sec | Shared 1k/sec pool (effectively low) | 100k req / day | Pay-per-call, no per-user throttle |
| Rate limit (with key) | Same (keys for bulk only) | 10 req / sec | Per-key bucket (granted slowly) | Same | n/a |
| Returns full text? | Yes (XML / PDF link) | Abstract only | Abstract + selected paywall-free | Abstract + selected | Title + URL + abstract-shaped snippet |
| LLM-friendly format | No (Atom XML) | No (XML) | Yes (JSON) | Yes (JSON) | Yes (JSON, snippets pre-shaped) |
| Cost | Free | Free (key recommended) | Free, key required for production | Free | 5 credits/call (~$0.005) |
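If you do call the public sources directly, the unauthenticated limits in the table can be enforced on the client side. The following is a minimal sketch, assuming a single process with one connection per source; `MinIntervalThrottle`, `LIMITS`, and `THROTTLES` are illustrative names and not part of any of these APIs.

```python
import time
from typing import Callable


class MinIntervalThrottle:
    """Blocks so that successive calls start at least `interval` seconds apart."""

    def __init__(self, interval: float,
                 clock: Callable[[], float] = time.monotonic,
                 sleep: Callable[[float], None] = time.sleep) -> None:
        self.interval = interval
        self._clock = clock
        self._sleep = sleep
        self._next_allowed = 0.0  # earliest time the next call may start

    def wait(self) -> float:
        """Sleep if needed and return the number of seconds slept."""
        now = self._clock()
        delay = max(0.0, self._next_allowed - now)
        if delay:
            self._sleep(delay)
        # Reserve the next slot relative to when this call is allowed to run.
        self._next_allowed = max(now, self._next_allowed) + self.interval
        return delay


# Unauthenticated limits from the table, expressed as seconds between requests.
LIMITS = {
    "arxiv": 3.0,       # 1 request every 3 seconds
    "pubmed": 1.0 / 3,  # 3 requests per second
}
THROTTLES = {name: MinIntervalThrottle(gap) for name, gap in LIMITS.items()}
```

Calling `THROTTLES["arxiv"].wait()` immediately before each arXiv request keeps a single worker inside the documented limit. It does nothing for a fleet of workers, which is where a shared proxy or cache comes in.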

What working products actually do

PaperQA2, Undermind, Elicit, ResearchRabbit, and the agentic RAG papers (e.g. Open-Source Agentic Hybrid RAG Framework, arXiv 2508.05660) all converge on a similar pattern. Three engineering moves matter most:

1. Cache by DOI / arXiv ID, not by query

The same paper gets requested by many different queries. Caching at the search-result level barely helps; caching at the paper-identifier level does. A small Redis (or even SQLite) layer keyed on doi:10.1038/... pays for itself in one afternoon of agent traffic.
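Identifier-level caching could look roughly like this. It is a sketch using SQLite from the standard library; the `PaperCache` class and the `fetch` callable are hypothetical, and `fetch` stands in for whatever upstream lookup you use.

```python
import json
import sqlite3
from typing import Callable


class PaperCache:
    """SQLite-backed cache keyed on a normalised paper identifier."""

    def __init__(self, path: str = ":memory:") -> None:
        self.db = sqlite3.connect(path)
        self.db.execute(
            "CREATE TABLE IF NOT EXISTS papers (id TEXT PRIMARY KEY, body TEXT)"
        )

    @staticmethod
    def key(identifier: str) -> str:
        """Map 'DOI:10.1038/X' and 'doi:10.1038/x ' to the same cache key."""
        return identifier.strip().lower()

    def get_or_fetch(self, identifier: str, fetch: Callable[[str], dict]) -> dict:
        k = self.key(identifier)
        row = self.db.execute(
            "SELECT body FROM papers WHERE id = ?", (k,)
        ).fetchone()
        if row is not None:
            return json.loads(row[0])  # cache hit: no upstream request
        record = fetch(identifier)     # cache miss: one upstream request
        self.db.execute(
            "INSERT OR REPLACE INTO papers VALUES (?, ?)", (k, json.dumps(record))
        )
        self.db.commit()
        return record
```

Lowercasing is safe for DOIs, which are case-insensitive. Passing a file path instead of `":memory:"` lets the cache survive restarts, so a paper fetched once is not requested from upstream again.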

2. Treat the citation graph as the slow-changing index

Semantic Scholar's strength isn't keyword search; it's the citation graph. PaperQA2 uses it to expand from one seed paper to a related cluster, not to discover papers from scratch. That's a much smaller request volume — one call per paper, not hundreds — and stays well under the rate limit.
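The seed-to-cluster expansion can be sketched as a bounded breadth-first walk. This is an illustration of the idea, not PaperQA2's actual code; `get_references` is a hypothetical callable that returns the identifiers a paper cites, for example a thin wrapper around a citation-graph lookup.

```python
from collections import deque
from typing import Callable, Iterable


def expand_cluster(seed: str,
                   get_references: Callable[[str], Iterable[str]],
                   max_papers: int = 25) -> list[str]:
    """Breadth-first walk of the citation graph with one lookup per paper."""
    seen = {seed}
    order = [seed]
    queue = deque([seed])
    while queue and len(order) < max_papers:
        paper = queue.popleft()
        for ref in get_references(paper):  # the only upstream call for this paper
            if ref not in seen:
                seen.add(ref)
                order.append(ref)
                queue.append(ref)
                if len(order) >= max_papers:
                    break
    return order
```

Because each paper is dequeued at most once, the number of upstream calls never exceeds `max_papers`, which makes the request budget for a review easy to reason about.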

3. Accept latency as the trade-off, OR proxy the rate limit

Either you make users wait 30-60 seconds for a careful, throttle-respecting query (PaperQA2's choice), or you add a layer that aggregates traffic across users and presents a single burst-tolerant interface to the agent (the API Pick choice). Both work. Mixing them — a low-latency UX over rate-limited public APIs without a buffer — is what fails.
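The "accept latency" branch mostly comes down to waiting politely when a source answers 429. Here is a minimal sketch with exponential backoff; `send` is a hypothetical callable that performs one request and returns `(status, retry_after_seconds, body)`, and the 3-second base delay is an assumption borrowed from arXiv's documented spacing.

```python
import random
import time
from typing import Callable, Optional


def backoff_delays(retries: int, base: float = 3.0, cap: float = 60.0) -> list[float]:
    """Exponential schedule before jitter: base, 2*base, 4*base, ... up to cap."""
    return [min(cap, base * (2 ** i)) for i in range(retries)]


def get_with_backoff(send: Callable[[], tuple[int, Optional[float], str]],
                     retries: int = 4,
                     sleep: Callable[[float], None] = time.sleep) -> str:
    """Call `send` until it stops returning 429, sleeping between attempts."""
    delays = backoff_delays(retries)
    for attempt in range(retries + 1):
        status, retry_after, body = send()
        if status != 429:
            return body
        if attempt == retries:
            break  # out of retries, give up below
        # Prefer the server's Retry-After hint when one was sent.
        wait = retry_after if retry_after is not None else delays[attempt]
        sleep(wait + random.uniform(0, 0.5))  # jitter de-synchronises clients
    raise RuntimeError("still rate-limited after retries")
```

With the defaults, a request that keeps hitting 429 waits roughly 3, 6, 12, and 24 seconds before giving up. That is the kind of wall-clock cost the low-latency UX cannot absorb without a buffer in front of the source.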

Working code: a literature agent that finishes

The minimum viable agent that survives a real review session:

import requests
from anthropic import Anthropic

KEY = "pk_yourkey"
client = Anthropic()  # reads ANTHROPIC_API_KEY from the environment

def fetch_tool(path: str) -> dict:
    """Download the Claude-format tool schema published for an endpoint."""
    r = requests.get(f"https://www.apipick.com{path}/tool-schema", timeout=30)
    r.raise_for_status()
    return r.json()["claude"]

# Two tools: academic search + URL extract for the full body
TOOLS = [
    fetch_tool("/api/search/academic"),
    fetch_tool("/api/extract"),
]

SYSTEM = """You are a literature-review research assistant. Process:
1. Use academic_search with the user's question to find relevant papers
   from arXiv, PubMed, bioRxiv, and medRxiv. One call usually returns
   the right set of seed papers.
2. For papers worth citing in detail, use extract_urls on the paper's URL
   to pull the body. Batch up to 5 URLs per call.
3. Answer the user's question with inline citations in the form
   [Author Year, doi/arxiv URL]. Quote findings verbatim where possible.
4. If the search returned <3 substantive results, expand the query and
   try once more. Don't loop indefinitely. Say "I couldn't find a strong
   answer in the indexed literature" if nothing matches.

Be precise. Cite every factual claim. Distinguish preprints (arXiv,
bioRxiv, medRxiv) from peer-reviewed (PubMed) when it matters."""

def call_tool(block) -> dict:
    """Run one tool_use block against API Pick and wrap the reply as a tool_result."""
    name_to_path = {"academic_search": "/api/search/academic", "extract_urls": "/api/extract"}
    try:
        r = requests.post(
            f"https://www.apipick.com{name_to_path[block.name]}",
            json=block.input,
            headers={"x-api-key": KEY},
            timeout=60,
        )
        content, is_error = r.text, r.status_code != 200
    except (requests.RequestException, KeyError) as exc:
        # Report network failures and unknown tool names to the model instead of crashing
        content, is_error = f"tool call failed: {exc}", True
    return {"type": "tool_result", "tool_use_id": block.id,
            "content": content, "is_error": is_error}

def review(question: str, max_turns: int = 8) -> str:
    msgs = [{"role": "user", "content": question}]
    for _ in range(max_turns):  # bounded, so the agent cannot loop forever
        r = client.messages.create(
            model="claude-sonnet-4-6",
            max_tokens=4096,
            system=SYSTEM,
            tools=TOOLS,
            messages=msgs,
        )
        msgs.append({"role": "assistant", "content": r.content})
        if r.stop_reason != "tool_use":
            # end_turn, max_tokens, etc.: return whatever text was produced
            return "\n".join(b.text for b in r.content if b.type == "text")
        results = [call_tool(b) for b in r.content if b.type == "tool_use"]
        msgs.append({"role": "user", "content": results})
    return "Stopped: tool-call budget exhausted before a final answer."

print(review("Recent advances in LLM-based protein structure prediction (2025)"))

Cost per question:

- 1-2 academic_search calls (5-10 credits = $0.005-$0.010)

- 1 extract call covering 3-5 papers (6-10 credits = $0.006-$0.010)

- ~5,000 input + 1,200 output tokens to Claude (~$0.04)

Round figure: ~5 cents per literature-review answer with citations. At 100 questions/day that's $5/day — about what one reasonable researcher's daily caffeine costs.
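The arithmetic behind that round figure, written out as a sketch. The per-credit price is inferred from the "5 credits ≈ $0.005" figure, and the LLM cost is the estimate given in the list; both are inputs you would replace with your own numbers.

```python
CREDIT_USD = 0.001           # inferred: 5 credits is roughly $0.005
LLM_USD_PER_QUESTION = 0.04  # estimate for ~5,000 input + 1,200 output tokens


def cost_per_question(search_calls: int, extract_credits: int) -> float:
    """Dollar cost of one answer: search credits + extract credits + LLM tokens."""
    credits = 5 * search_calls + extract_credits
    return round(credits * CREDIT_USD + LLM_USD_PER_QUESTION, 4)


low = cost_per_question(search_calls=1, extract_credits=6)    # cheapest case listed
high = cost_per_question(search_calls=2, extract_credits=10)  # most expensive case listed
print(f"per question: ${low:.3f} to ${high:.3f}")
print(f"per 100 questions: ${100 * low:.2f} to ${100 * high:.2f}")
```

The range works out to about 5 to 6 cents per question. The LLM tokens dominate, so the search layer is not where cost optimisation pays off.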

Sub-vertical tweaks

Biomedical literature

Bias the agent toward PubMed (peer-reviewed) and explicitly mark bioRxiv/medRxiv hits as preprints. When asking "what does the latest evidence say", the agent should weight peer-reviewed higher. A line in the system prompt — "If preprint and peer-reviewed sources disagree, defer to peer-reviewed and note the discrepancy" — handles this cleanly.
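The same bias can also be applied in code before results reach the model. This sketch assumes each result dict carries a `source` field, which is a hypothetical name to be mapped to whatever your search layer returns. Treating every PubMed hit as peer-reviewed follows the simplification used in this article.

```python
PREPRINT_SOURCES = {"arxiv", "biorxiv", "medrxiv"}


def label_and_rank(results: list[dict]) -> list[dict]:
    """Tag each hit as preprint or peer-reviewed and put peer-reviewed first."""
    labelled = []
    for r in results:
        is_preprint = r["source"].lower() in PREPRINT_SOURCES
        labelled.append({**r, "status": "preprint" if is_preprint else "peer-reviewed"})
    # sorted() is stable, so the original relevance order is kept within each group.
    return sorted(labelled, key=lambda r: r["status"] == "preprint")
```

Passing the `status` field through to the model gives the system-prompt rule something explicit to act on, rather than leaving the agent to guess from the URL.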

Mathematical / CS

arXiv dominates here. The signal-to-noise ratio is better than in biomed, and citations matter more than recency for foundational work. Search with broader queries and let the agent prune.
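For readers who query arXiv directly, a broad category-scoped query and a conversion from Atom XML to plain dicts might look like the sketch below. The endpoint and the `search_query`, `start`, and `max_results` parameters are from arXiv's public API; the helper names and the choice of fields to keep are assumptions made for illustration.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlencode

ATOM = {"atom": "http://www.w3.org/2005/Atom"}


def arxiv_query_url(phrase: str, category: str, max_results: int = 25) -> str:
    """Build a query for a quoted phrase restricted to one arXiv category."""
    query = f'all:"{phrase}" AND cat:{category}'
    params = {"search_query": query, "start": 0, "max_results": max_results}
    return "http://export.arxiv.org/api/query?" + urlencode(params)


def _text(entry: ET.Element, tag: str) -> str:
    """Return the tag's text with runs of whitespace collapsed to single spaces."""
    raw = entry.findtext(f"atom:{tag}", default="", namespaces=ATOM)
    return " ".join(raw.split())


def parse_atom(feed_xml: str) -> list[dict]:
    """Turn an Atom feed into a list of {url, title, abstract} dicts."""
    root = ET.fromstring(feed_xml)
    return [
        {"url": _text(e, "id"), "title": _text(e, "title"), "abstract": _text(e, "summary")}
        for e in root.findall("atom:entry", ATOM)
    ]
```

Requests built this way still need to respect the one-request-every-three-seconds rule. The pruning step then runs over the parsed dicts, which are far cheaper to put in a prompt than raw XML.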

Drug discovery / clinical

Pair with Clinical Search (ClinicalTrials.gov + FDA labels + ChEMBL + DrugBank) for the regulatory and bioactivity dimensions that academic search can't cover. The combination — peer-reviewed literature + trial registry + structural data — is how a drug-repurposing or pharmacovigilance agent gets useful.

Where this generalises

The rate-limit problem is not unique to academic search. Any open public dataset that shipped APIs in the pre-LLM era is being subjected to the same load patterns now: SEC EDGAR, USPTO, EPO, public-records databases, weather services, government open data. The same engineering pattern works for all of them — cache at the entity level, layer a managed proxy, accept latency as the alternative when you can't afford the proxy.

The deeper shift: in 2026 it's no longer reasonable to expect a free public API to absorb LLM-agent load directly. The endpoints aren't disappearing — but they're getting wrapped by a layer of paid managed services that exists to translate "polite human researcher" rate limits into "burst-tolerant agent traffic". API Pick Academic Search is one of those layers for the literature-review use case. Expect more.

Frequently Asked Questions

Why did arXiv start rate-limiting so aggressively in 2024?

LLM-driven scrapers. arXiv staff have been explicit on their developer mailing list and on @arxiv on X about the pattern: an LLM agent fans out 50 parallel requests to fetch references for a single paper, multiply by thousands of users running similar workflows, and the whole index gets degraded. The 3-second-between-requests rule was always documented, but enforcement got strict starting in late 2024 / early 2025.

Is Semantic Scholar's 1,000 req/sec really shared?

Yes — that's the unauthenticated pool, shared across every IP that hits the public API. In practice, unauthenticated requests start failing during peak hours regardless of your individual rate. The official advice is to apply for an API key, which moves you to a per-key bucket. Application takes weeks and is granted on the assumption of academic, not commercial, use.

What about PubMed's E-utils?

Better than the others: 3 req/sec without a key, 10 req/sec with. An NCBI API key is generated from the settings page of a free NCBI account and is available immediately. Still, 10 req/sec is fine for a single user but inadequate for a multi-user product where every user question fans out to several PubMed calls.
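To make the capacity point concrete, here is a small sketch that builds an E-utilities `esearch` URL with an optional `api_key` and estimates how many user questions per second each limit can sustain. The helper names are illustrative, and the capacity figure assumes every question makes the same number of PubMed calls.

```python
from typing import Optional
from urllib.parse import urlencode

ESEARCH = "https://eutils.ncbi.nlm.nih.gov/entrez/eutils/esearch.fcgi"


def esearch_url(term: str, api_key: Optional[str] = None, retmax: int = 20) -> str:
    """Build a PubMed search URL; the key raises the limit from 3 to 10 req/sec."""
    params = {"db": "pubmed", "term": term, "retmode": "json", "retmax": retmax}
    if api_key:
        params["api_key"] = api_key
    return f"{ESEARCH}?{urlencode(params)}"


def sustainable_questions_per_sec(calls_per_question: int, has_key: bool) -> float:
    """Upper bound on questions/sec across ALL users sharing one key or IP."""
    limit = 10 if has_key else 3
    return limit / calls_per_question
```

If each question fans out to five PubMed calls, one key supports at most two questions per second across the whole product. A modest burst of concurrent users will exceed that.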

Why does PaperQA2 work where my homegrown agent doesn't?

Three reasons. (1) PaperQA2 batches and caches aggressively — the same DOI lookup is never made twice. (2) It uses Semantic Scholar primarily for its citation graph (one call per paper) rather than as a search index. (3) It accepts a longer wall-clock per question in exchange for not exceeding limits. If you want the same UX in a product where users expect 5-second responses, you need a higher-throughput layer.

What does API Pick Academic Search actually solve?

It manages the rate limits and source coverage so your agent doesn't have to. One POST /api/search/academic call returns ranked results from arXiv, PubMed, bioRxiv, and medRxiv together, pre-shaped for LLM consumption. 5 credits per call (≈$0.005), only deducted on HTTP 200. You get to focus on the agent loop and the prompt; the throttling is our problem.

Sarah Choy is the CEO of API Pick. She writes about building production-ready APIs for AI agents and LLM workflows.